Intel, AMD to Team up on 8th Generation Mobile Chips

BY JOHN BUREK, MATT SAFFORD

Dogs and cats living together? Intel and AMD in one chip? We’d have bet first on the felines and canines hooking up. A blockbuster blog post from Intel’s Christopher Walker, vice president of the Client Computing Group and general manager of the Mobile Client Platform, backed by reporting from several other outlets, confirms the previously unthinkable: Intel and AMD will join forces on a high-performance mobile CPU that will combine Intel’s Core processor technology and AMD’s discrete graphics.

It is unclear whether this will be a single version of a single chip or a series of CPUs. Intel is cagily referring to this rollout as a “new product, which will be part of our 8th Gen Intel Core family.” This new chip or chips will serve just a niche of the market (thin-design laptops that require high-performance graphics, presumably including some gaming machines). Even so, this is a watershed moment in the landscape for mobile CPUs and GPUs, with these two bitter CPU rivals collaborating in such an unprecedented way.

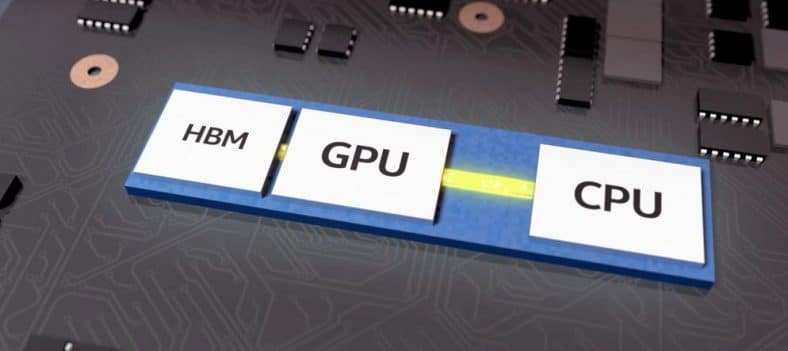

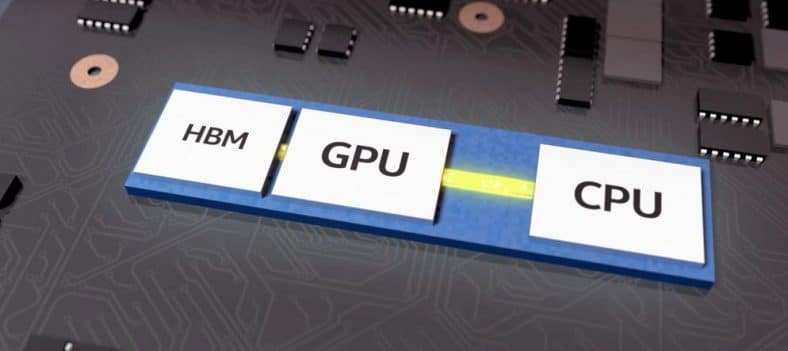

Note that the CPU and GPU elements are combined in one unit; this is distinct from the typical design in a gaming laptop or other power machine, in which a CPU with integrated graphics is augmented by a separate, discrete graphics chip, with the two hosted on the mainboard separately.

The Intel/AMD collaboration doesn’t yet have a formal name, and indeed, the details are somewhat sketchy. But here are the key things we know so far.

It’s part of Intel’s 8th generation H Series. That much is confirmed. Intel’s H series laptop chips have been the CPUs typically seen in gaming laptops and other high-performance mobile machines. Most laptops using these CPUs are thicker designs, with an inch-thick-or-so profile, and graphics supplied by a discrete Nvidia chip of some flavor. (There are rare exceptions: in the mobile workstation market, you might see the occasional model with an Intel H-series chip paired with an AMD FirePro workstation GPU.)

The new venture being part of the 8th Generation Core series, we’ll have to assume that the underlying CPU architecture will be Intel’s “Coffee Lake.” The initial wave of 8th Generation mobile CPUs (in the U series, meant for the thinnest and lightest laptops), introduced in Q3 of 2017, employed a tweaked version of the “Kaby Lake” silicon used in Intel’s 7th Generation processors, dubbed “Kaby Lake-R” (the “R” for “Refresh”). Although Intel has not confirmed the provenance of the new Intel/AMD CPU/GPU architectures, we have to imagine it will employ the same Coffee Lake architecture as in Intel’s latest desktop CPUs, such as the Core i7-8700K.

A key aim: slimmer laptops with serious gaming performance. Right off the bat in Intel’s press release about its new chip, the company calls out a reduction in the “Silicon Footprint by More Than 50%, [Enabling] Real Time Power Sharing Across CPU and GPU for Optimal Performance.” In part, that means the chip should lead to slimmer, lighter gaming-class laptops. That’s not dissimilar to Nvidia’s “Max-Q” technology, which we’ve seen so far in a few laptops, most recently the Acer Predator Triton 700.

But there’s also a serious focus on the integration of the processor and graphics here that could result in better battery life and the ability to ramp up or down the CPU and GPU sides of the chip in ways we haven’t seen before from a commercial product. According to Intel, the integration of the H Series CPU and Radeon graphics, connected via EMIB (more on this in a moment), will enable “system designers to adjust the ratio of power sharing between the processor and graphics based on workloads and usages, like performance gaming. Balancing power between our high-performing processor and the graphics subsystem is critical to achieve great performance across both processors as systems get thinner.” The TL;DR version of that: flexible power delivery across CPU and graphics, according to the task.
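To make the power-sharing idea concrete, here is a toy sketch of how a fixed package power budget might be divided between a CPU and a GPU according to workload. This is purely illustrative and is not Intel’s algorithm, which the company has not detailed: the function name `split_power`, the 45-watt budget, the 5-watt floor, and the proportional-split rule are all our own assumptions.

```python
def split_power(total_watts, cpu_demand, gpu_demand, floor_watts=5.0):
    """Divide a shared power budget between CPU and GPU (hypothetical model).

    Each side is guaranteed `floor_watts`; the remaining budget is shared
    in proportion to the relative demand of the two sides.
    """
    if total_watts < 2 * floor_watts:
        raise ValueError("budget too small to cover both floors")
    if cpu_demand < 0 or gpu_demand < 0:
        raise ValueError("demand values must be non-negative")

    spare = total_watts - 2 * floor_watts
    demand = cpu_demand + gpu_demand
    # With no demand on either side, split the spare budget evenly.
    cpu_share = 0.5 if demand == 0 else cpu_demand / demand

    cpu_watts = floor_watts + spare * cpu_share
    gpu_watts = total_watts - cpu_watts
    return cpu_watts, gpu_watts


if __name__ == "__main__":
    # Assumed 45 W budget; a GPU-heavy gaming load versus a CPU-heavy load.
    print(split_power(45.0, cpu_demand=1.0, gpu_demand=3.0))
    print(split_power(45.0, cpu_demand=3.0, gpu_demand=1.0))
```

In this model a GPU-heavy game pulls most of the spare budget toward the graphics side, while a CPU-bound task shifts it back, which is the general behavior Intel’s statement describes. Real hardware would also have to account for thermals, voltage limits, and response time, none of which this sketch attempts.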

We’ve seen Intel’s CPUs gain the ability in recent years to ramp up and down substantially to tailor performance to a particular task while keeping power consumption at a minimum. This has resulted in huge gains in battery life while increasing performance. If this chip can do something similar with both the CPU and the dedicated-class graphics, it could be a boon for on-the-go content creators, providing workstation-like computing abilities in a slim form factor, with battery life that’s longer than we’ve seen in previous portables with this much muscle.

Of course, for now, this is pure speculation. But there seems to be potential here for a level of performance and efficiency that we’ve never seen from previous devices with separate CPUs and discrete graphics.

Bridging the gap: meet “EMIB.” What’s the magic tech pixie dust that allows Intel’s and AMD’s silicon to work together in (presumed) harmony? Intel says that’s down to a technology called Embedded Multi-Die Interconnect Bridge (EMIB), which the company designed as a more efficient alternative to an interposer. It has already been deployed in some server silicon, and it enables modular die designs assembled in a “building block” style.

Interposers, which AMD has used previously in graphics cards such as the AMD Radeon R9 Fury X, tend to be large and expensive, as they form a sort of physical foundation layer that connecting chips plug into and route through. EMIBs, Intel says, are much smaller, “eliminat[ing] height impact as well as manufacturing and design complexities, enabling faster, more powerful and more efficient products in smaller sizes.” The company says its AMD-packing H series is the first consumer product that uses EMIB. If this technology delivers on the above promises, we’re sure it won’t be the last.

HBM2 is the memory type. The use of dedicated graphics means an allotment of onboard graphics memory, not just a sharing of main system memory for graphics-acceleration purposes. What’s interesting is that Intel has called out HBM2 as the memory type that will be used in concert with the new Intel-AMD chip. In consumer-graphics circles, HBM2 rolled out with the introduction this past summer of AMD’s Vega desktop video cards, in the AMD Radeon RX Vega 64 and Radeon RX Vega 56. It remains to be seen if the implementation of HBM2 here is exactly parallel to that of HBM2 on a desktop video card, given the different, modular design aspects in play here.

One thing to note: Original HBM memory was dogged by issues of supply. It’s less clear whether the same malady has affected HBM2, given that AMD’s Vega cards have been slurped up by cryptocurrency miners with great relish since their debut. But it’s something analysts surely will keep an eye on.

Will it be Vega or something else? One of the many unanswered questions at this stage is which AMD graphics architecture is in play. The use of HBM2 seems to be a strong indication that AMD’s Vega graphics will be used, as that’s the same type of memory used in the company’s high-end Radeon RX Vega 64 and Vega 56 graphics cards. But Vega is also making its way into the company’s new Ryzen Mobile chips, sans HBM2 memory. And on the console side, Microsoft’s just-released high-end Xbox One X uses a completely custom AMD-built graphics chip that borrows from the design of both Vega and previous-generation AMD Polaris chips. The Xbox One X doesn’t use HBM memory at all, instead opting for the GDDR5 memory that’s more prevalent with Nvidia graphics cards these days.

So we could be looking at AMD graphics silicon here that’s very much custom. But given that the new Intel H Series chip, even if it’s wildly successful, will likely sell in far fewer numbers than a gaming console, we suspect that the graphics design AMD created for Intel will stick closer to Vega. The use of HBM2 strengthens that argument. Exactly what kind of performance will the graphics on this chip provide, though? That’s still very much up in the air, as is whether the dedicated AMD graphics will co-exist with an Intel integrated solution, with the ability to switch between for power savings.

Availability: Q1 2018. With no more information on availability, we have to assume that CES 2018 will be the next big data dump on this interesting, groundbreaking niche silicon collaboration. Needless to say, we’re salivating to see this silicon in action.